Setting up an AI foreign language reading companion

The hardest part of reading foreign-language books is not the reading itself—it is looking up words. If I could automate vocabulary lookup while breaking sentences into small, manageable chunks, the barrier to reading in a foreign language would be much lower.

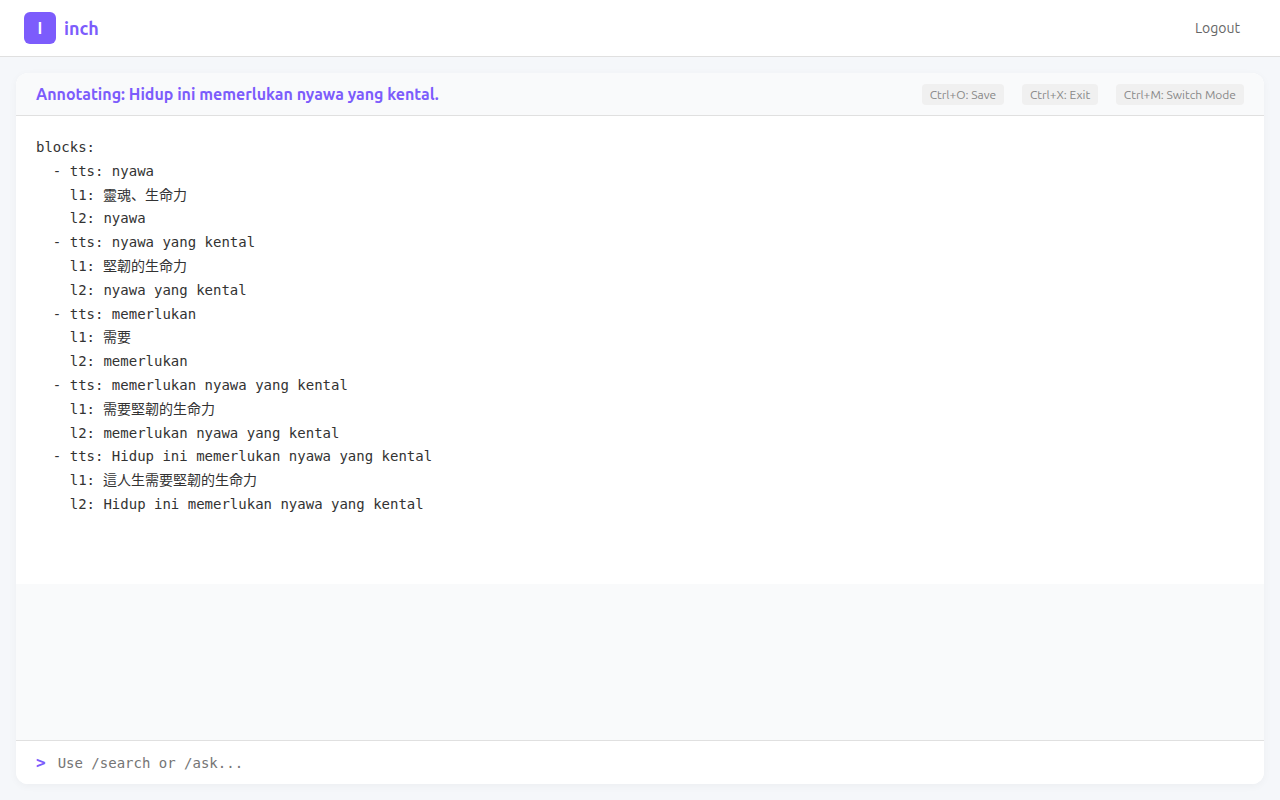

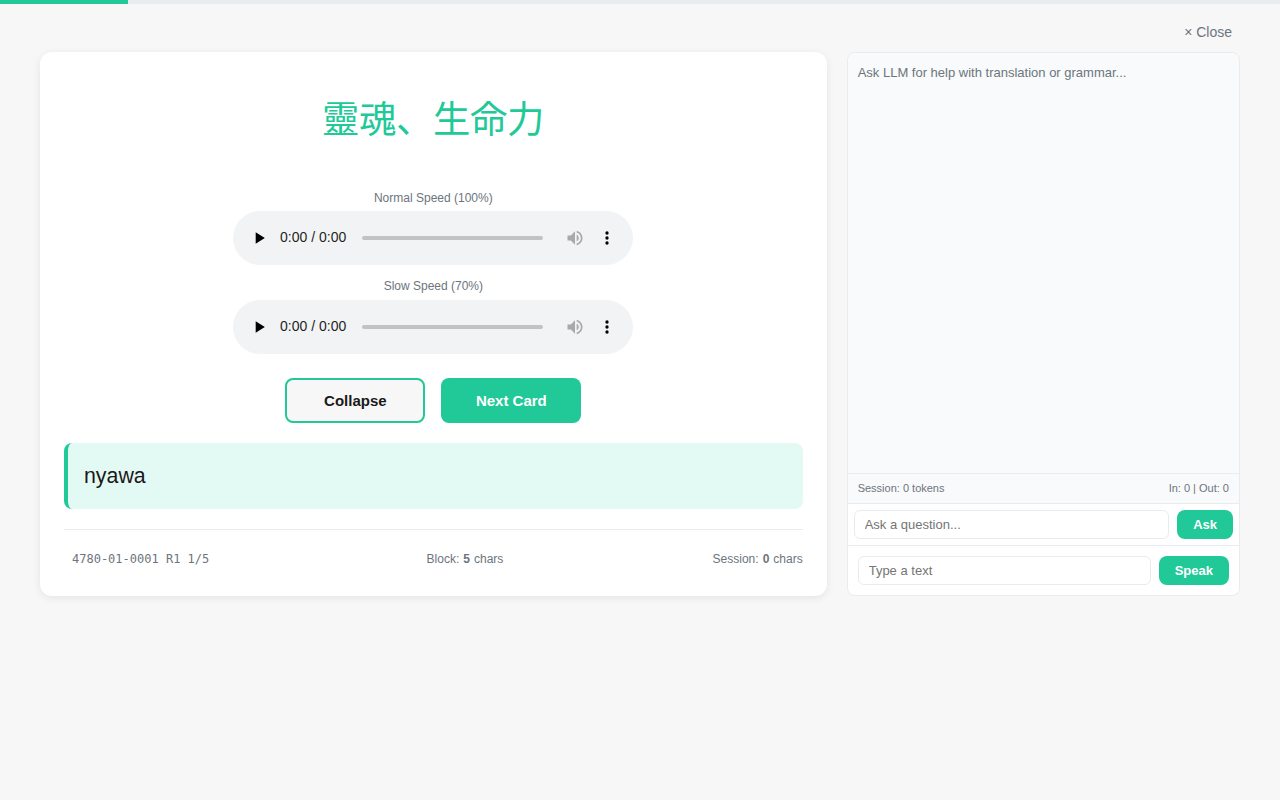

Hearing a whole foreign sentence played back at once overwhelms the ear. But if you hear one core word first, then add a modifier, and keep layering piece by piece until the full sentence comes together, you can follow it by the end. That is the decomposition method I came up with.
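To make the layering concrete, here is a minimal Python sketch of one way to represent it. It assumes a sentence has already been split into tokens and that each step reveals one more contiguous span. The `Chunk` type, the `layer_fragments` function, and the German example sentence are hypothetical illustrations, not the chunking rules Inch actually uses.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    start: int  # index of the first token in the span (inclusive)
    end: int    # index one past the last token in the span (exclusive)


def layer_fragments(tokens: list[str], steps: list[Chunk]) -> list[str]:
    """Return one fragment per step, each adding a span to the previous one.

    Revealed tokens are always joined in their original sentence order,
    so every fragment is an ordered subset of the full sentence.
    """
    shown: set[int] = set()
    fragments = []
    for step in steps:
        shown.update(range(step.start, step.end))
        fragments.append(" ".join(tokens[i] for i in sorted(shown)))
    return fragments


# Hypothetical example: core "Frau liest" first, then object, subject modifier, time phrase.
tokens = "Die alte Frau liest jeden Abend ein Buch".split()
steps = [Chunk(2, 4), Chunk(6, 8), Chunk(0, 2), Chunk(4, 6)]
for fragment in layer_fragments(tokens, steps):
    print(fragment)
# Frau liest
# Frau liest ein Buch
# Die alte Frau liest ein Buch
# Die alte Frau liest jeden Abend ein Buch
```

Keeping the original word order means each word is heard in the position it will occupy in the finished sentence, which is the point of building up rather than translating fragments in isolation.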

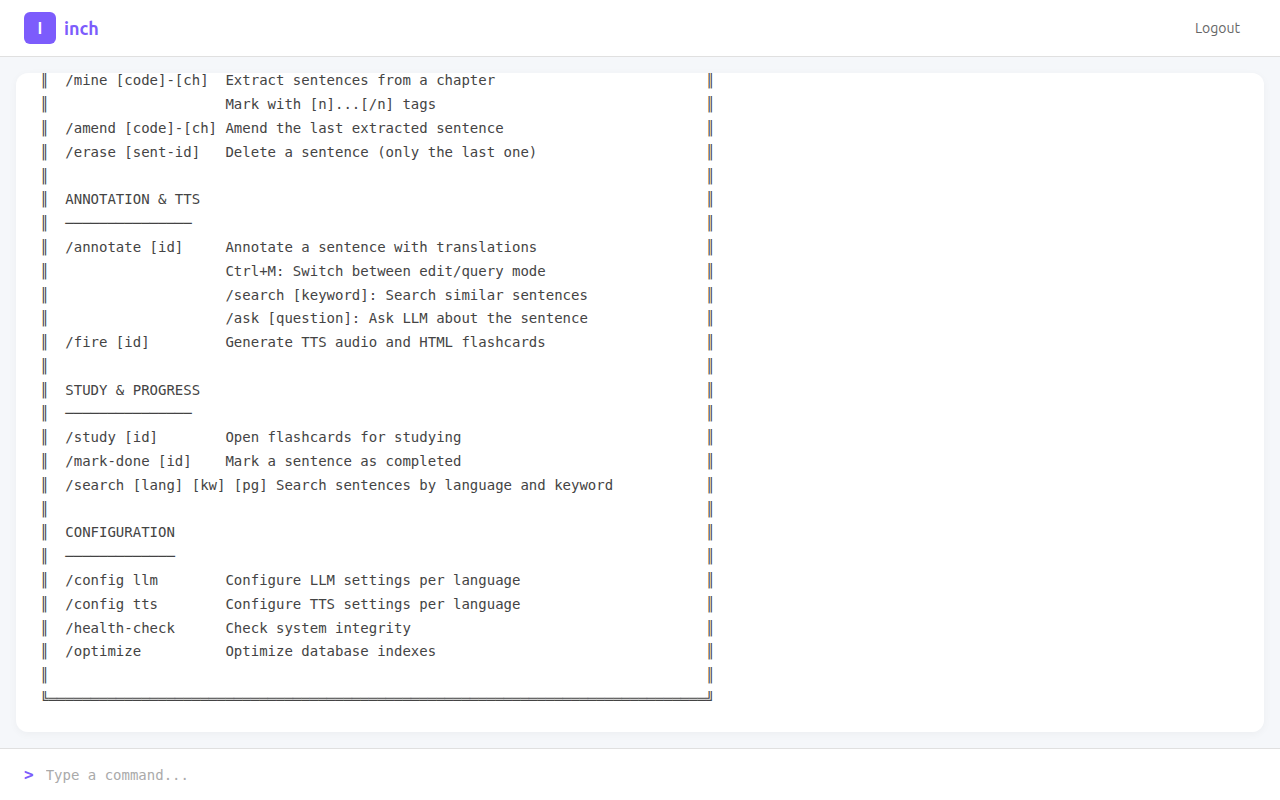

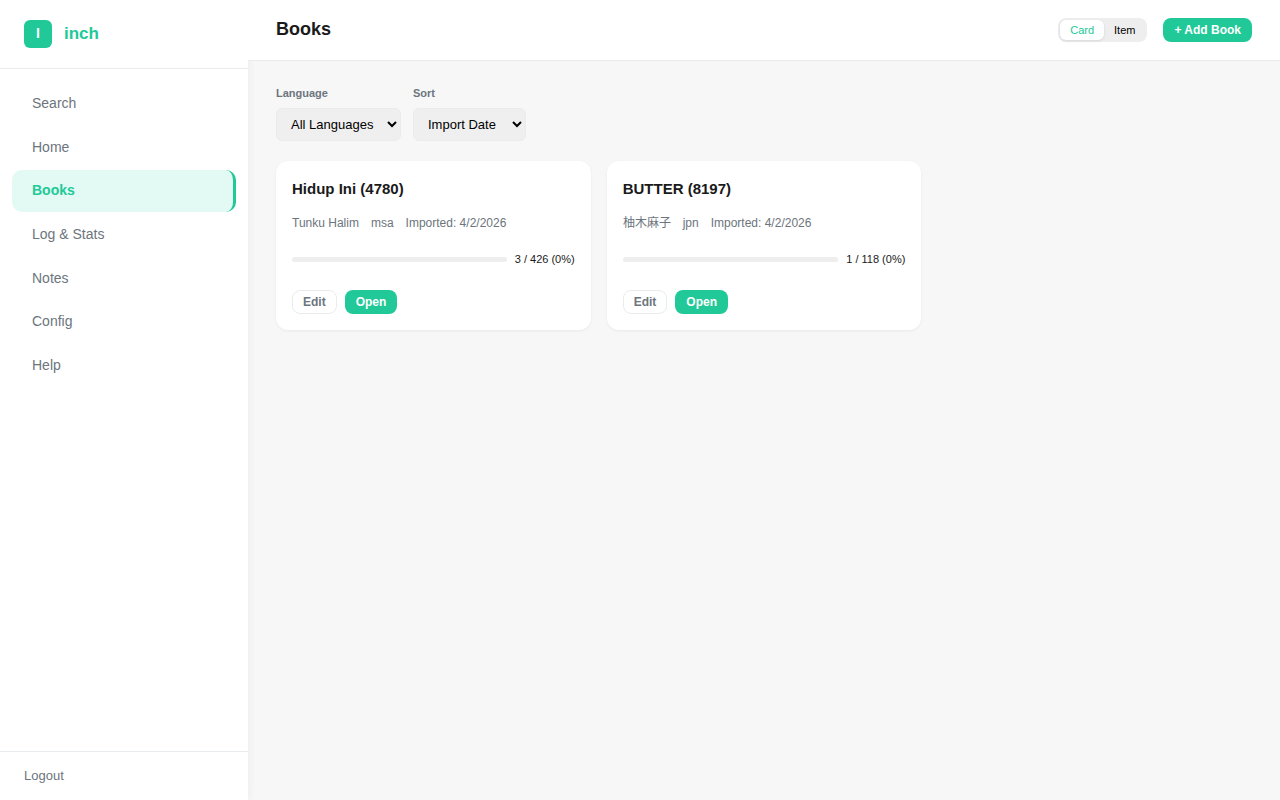

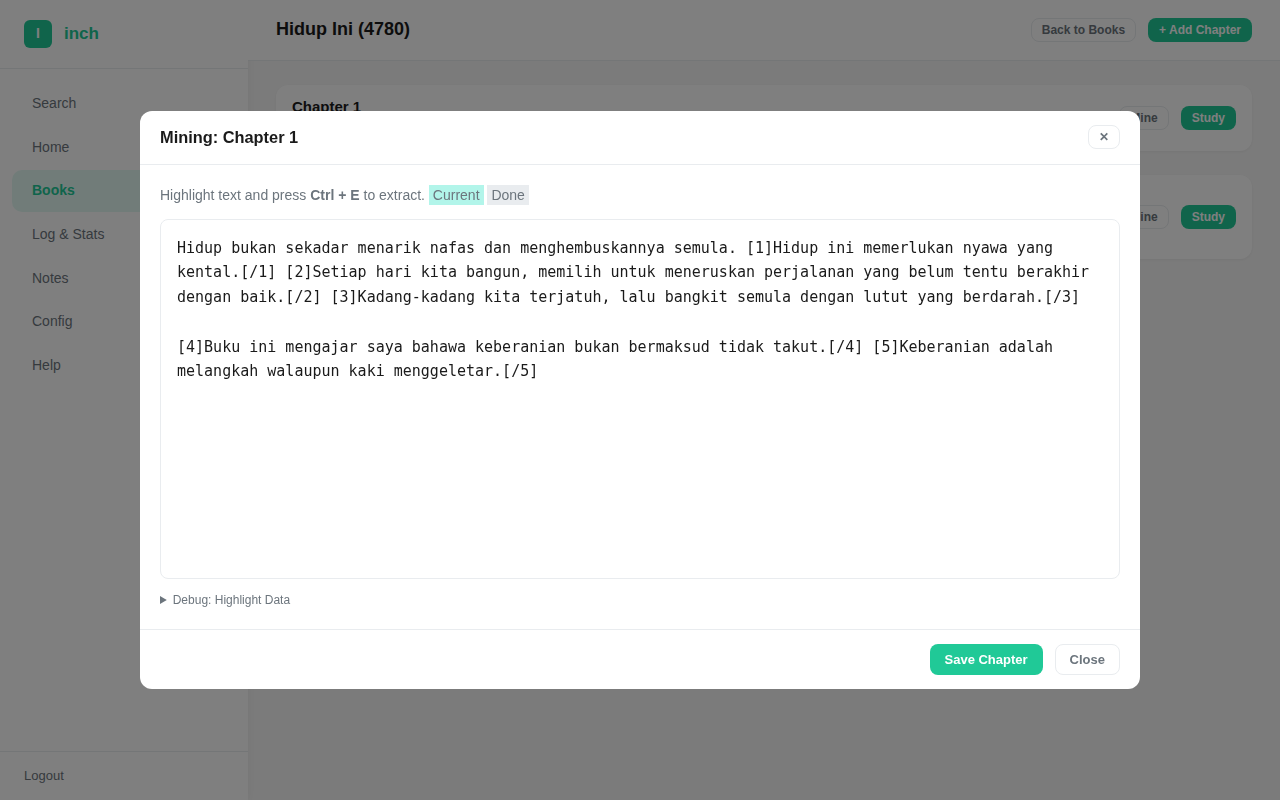

I have built several pieces of software for this. The earliest version was a Go web app called Inch, with a terminal-style interface. The workflow: paste a chapter in, tag sentences with markers, then manually annotate how each sentence should be broken down and translated.

Once a chapter was annotated, I would call the Azure TTS API or the Google Chirp TTS API to generate audio, then export study pages section by section and open them in a browser to study.

![After pasting a chapter into the editor, sentences are tagged with [n]…[/n] markers](inch-v1-editor.png)

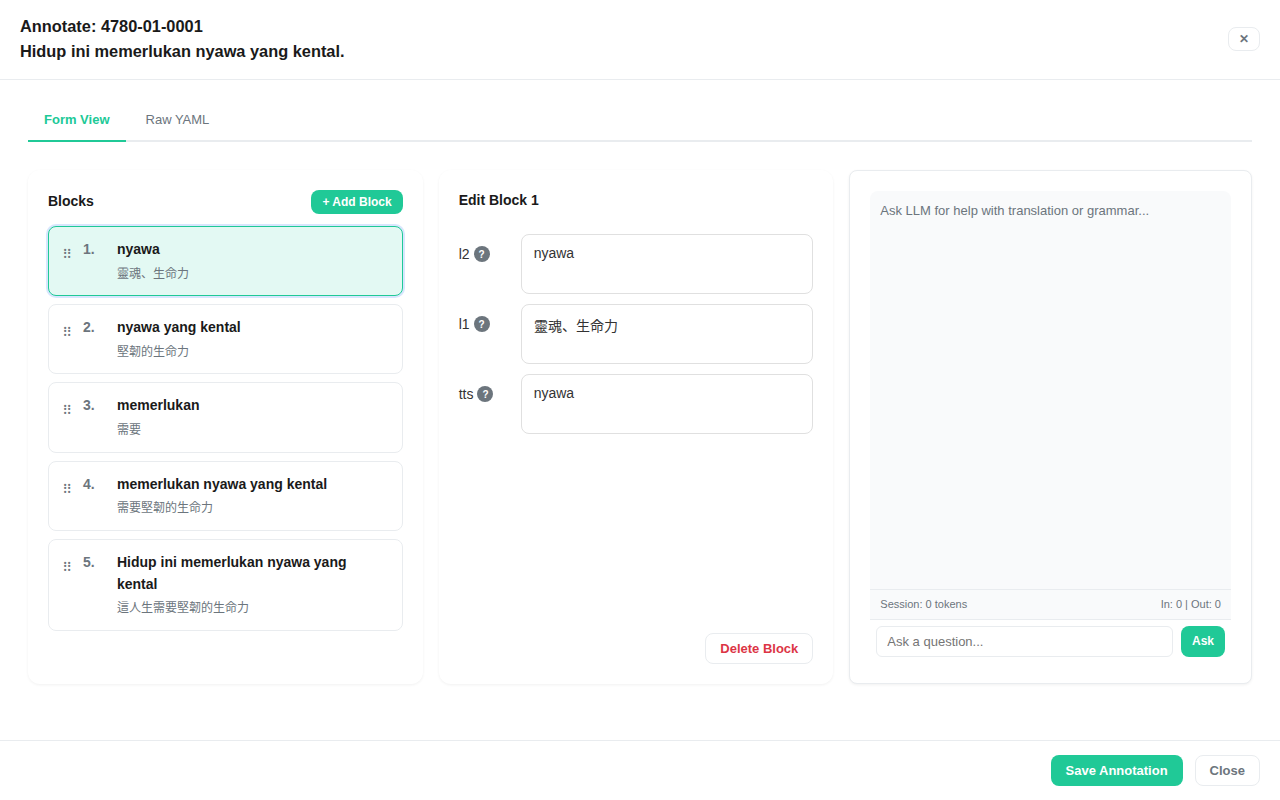
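For illustration, pulling the tagged sentences back out of a pasted chapter could look like the Python sketch below. It assumes `[n]` stands for a sentence number (for example `[1]…[/1]`); that reading of the marker syntax and the `extract_sentences` helper are assumptions, and this is not the Go code Inch itself used.

```python
import re

# Assumed format: each sentence wrapped as [1]...[/1], [2]...[/2], and so on.
# The backreference \1 forces each closing tag to match its opening number,
# and DOTALL lets a sentence span line breaks.
MARKER = re.compile(r"\[(\d+)\](.*?)\[/\1\]", re.DOTALL)


def extract_sentences(chapter: str) -> dict[int, str]:
    """Map each sentence number to its text, collapsing internal whitespace."""
    return {int(num): " ".join(body.split()) for num, body in MARKER.findall(chapter)}


chapter = """[1]Es war einmal ein König.[/1]
[2]Er hatte drei
Töchter.[/2]"""
print(extract_sentences(chapter))
# {1: 'Es war einmal ein König.', 2: 'Er hatte drei Töchter.'}
```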

Splitting one sentence into ten fragments and writing ten sets of translations, across the hundreds of sentences in a book, made the annotation alone a massive undertaking. I later built a second generation, Inch-GUI, with a graphical interface: sentences could be highlighted with the mouse, annotation moved from hand-written config files to forms, and an AI chatroom let me ask about unfamiliar words on the spot. The second generation was easier to use, but once I got going the core problem remained: every sentence still had to be split and translated by hand. Both versions soon ended up gathering dust.

It occurred to me that I could hand this entire time-consuming annotation job to Claude Code, so I set out to build the third generation of Inch.

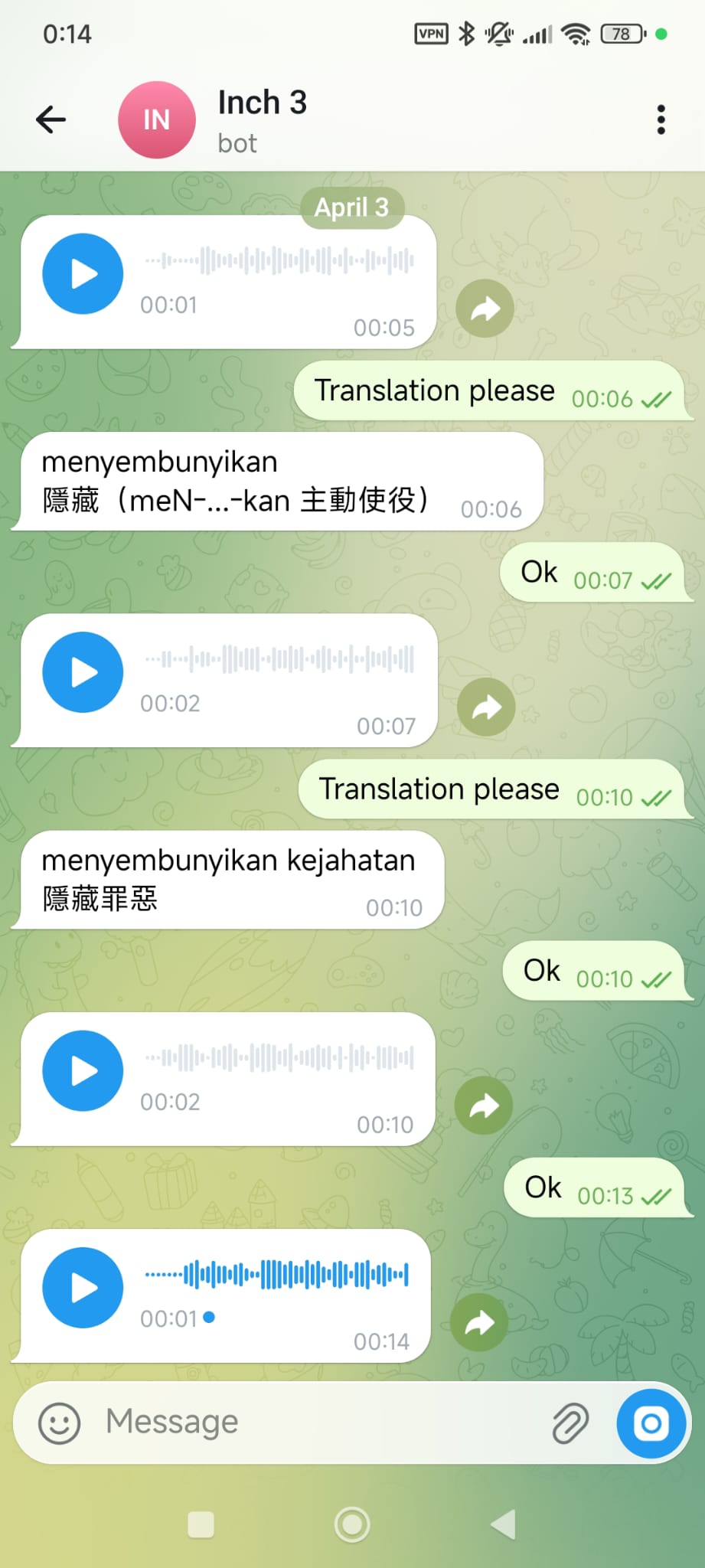

Inch 3 is no longer a web app: there is no server and no custom frontend (Telegram serves as my frontend). The whole system is just a folder, with each workflow defined as a skill:

- /inch-connect-telegram: binds a Telegram bot
- /inch-setup-new-language-profile: configures the language and TTS API
- /inch-import-books: imports ebooks
- /inch-continue: starts a study session

I explained my sentence decomposition method to Claude Code, and it followed this logic to produce knowledge/chunking-strategy.md.

Initial setup takes about half an hour. Once everything is configured, I just type /inch-continue in the terminal, and Claude Code connects to Telegram to walk me through listening to and reading ten sentences, starting from the smallest chunks.

After ten sentences, Claude Code records which chunks I understood and which I did not, storing them in a database as reference for future decomposition.
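The post does not say which database Inch 3 uses, so the following is only a sketch assuming SQLite via Python's built-in `sqlite3` module. The `chunk_results` table and the `open_db`, `record_session`, and `trouble_chunks` functions are hypothetical names. The idea is to log one row per chunk per session and later surface the chunks missed most often, which a decomposition step could then split more finely.

```python
import sqlite3


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the hypothetical results database."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS chunk_results (
               chunk TEXT NOT NULL,
               understood INTEGER NOT NULL,  -- 1 = understood, 0 = not
               recorded_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
           )"""
    )
    return conn


def record_session(conn: sqlite3.Connection, results: dict[str, bool]) -> None:
    """Store one row per chunk; 'with conn' commits the batch as one transaction."""
    with conn:
        conn.executemany(
            "INSERT INTO chunk_results (chunk, understood) VALUES (?, ?)",
            [(chunk, int(ok)) for chunk, ok in results.items()],
        )


def trouble_chunks(conn: sqlite3.Connection, limit: int = 10) -> list[str]:
    """Chunks missed at least once, most-missed first (ties broken alphabetically)."""
    rows = conn.execute(
        """SELECT chunk FROM chunk_results
           GROUP BY chunk
           HAVING SUM(understood = 0) > 0
           ORDER BY SUM(understood = 0) DESC, chunk
           LIMIT ?""",
        (limit,),
    ).fetchall()
    return [row[0] for row in rows]


conn = open_db()
record_session(conn, {"Frau liest": True, "jeden Abend": False})
record_session(conn, {"jeden Abend": False, "ein Buch": False})
print(trouble_chunks(conn))
# ['jeden Abend', 'ein Buch']
```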

After using it for a while, my resistance to reading foreign books has genuinely dropped. Paragraphs dense with unfamiliar words, which I used to skip, now go to Inch 3: I listen through the fragments a few rounds, and I am through. I finished the first chapter without much pain.

The downside is that Inch 3 is tightly coupled to Claude Code—whether it would still work with a different model is an open question.

But I have an Anthropic subscription for now, so I might as well make the most of it!

If you are interested in the source code, you can check it out here: https://github.com/mhwang-1/inch-3