Give Claude Code a seat at the brainstorming table

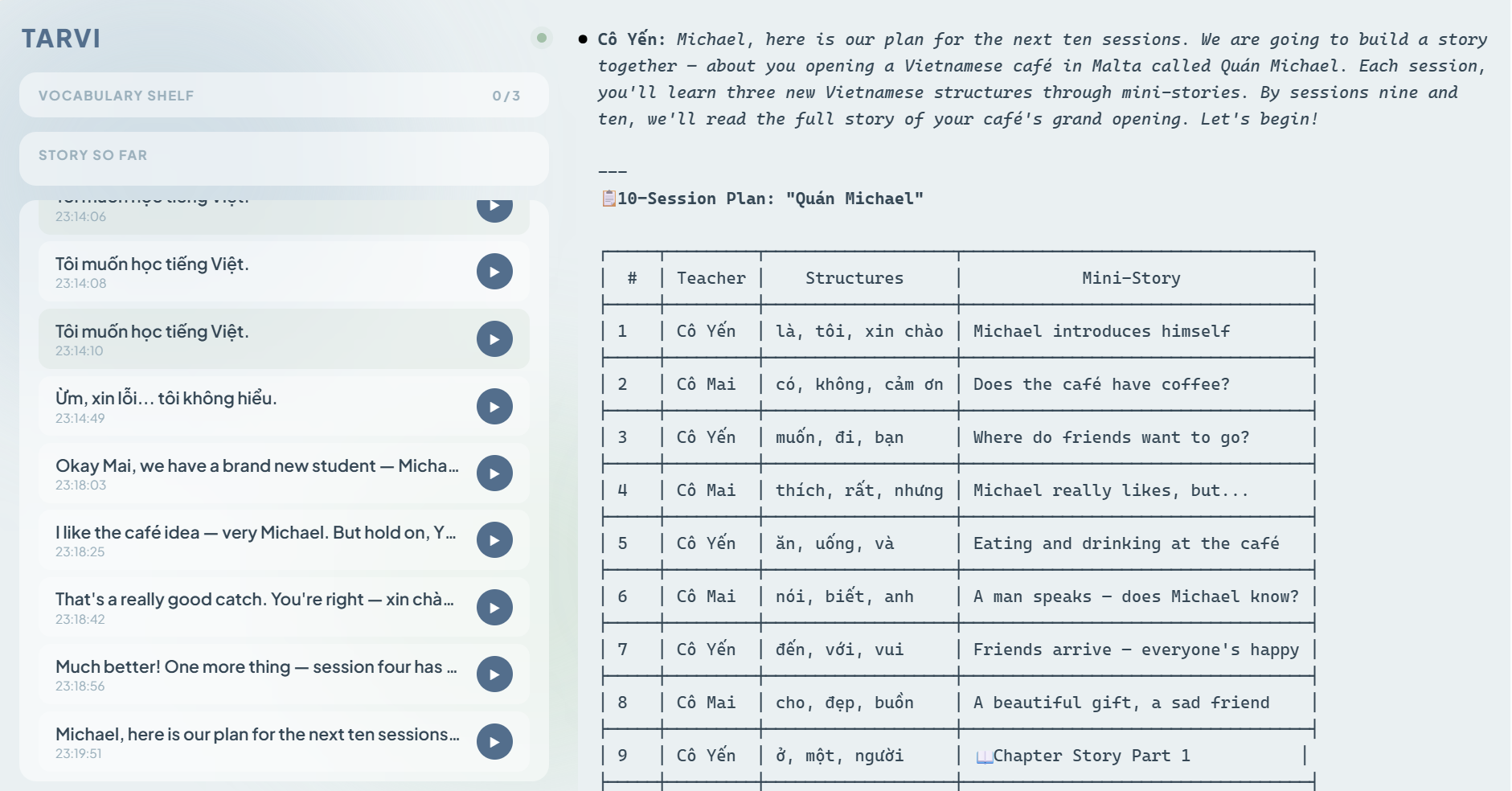

A few days back, I decided to create a TPRS (Teaching Proficiency through Reading and Storytelling) language tutoring app. I gave Claude Code an MCP server capable of voice output so it could play the role of a tutor. This post is not about the app itself (I will introduce that in another post once testing is complete), but rather a record of my development experience.

In the past, whenever I built a program, I was used to clearly defining the requirements first and then handing them over to Claude Code for execution. This time, I tried a different workflow: I listed the desired features and asked Claude Code to dispatch multiple “Agents” to brainstorm and propose feasible solutions. Then, I had another group of Agents review and critique those solutions. Finally, I had all the discussions summarised into a comprehensive HTML report.

The prompt was simple:

Please dispatch agents to discover solutions for the following items:

- Feature 1

- Feature 2

- Feature 3

......

Once done, please dispatch reviewers to challenge and rate the solutions. Then revise the solutions accordingly and write a comprehensive report in html format.
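If you reuse this prompt across projects, the feature list is the only part that changes, so it can be templated. The following is a minimal sketch in Python, not part of Claude Code or of the app described here; `build_brainstorm_prompt` is a hypothetical helper name, and the feature strings are placeholders.

```python
def build_brainstorm_prompt(features):
    """Assemble the brainstorm-and-review prompt from a list of feature descriptions.

    Hypothetical helper for illustration: it only builds a string that
    can be pasted into a Claude Code session.
    """
    if not features:
        raise ValueError("at least one feature is required")

    # One markdown bullet per feature, in the order given.
    bullets = "\n".join(f"- {feature}" for feature in features)

    return (
        "Please dispatch agents to discover solutions for the following items:\n\n"
        f"{bullets}\n\n"
        "Once done, please dispatch reviewers to challenge and rate the solutions. "
        "Then revise the solutions accordingly and write a comprehensive report "
        "in html format."
    )


if __name__ == "__main__":
    # Placeholder feature names; replace them with your own list.
    print(build_brainstorm_prompt(["Feature 1", "Feature 2", "Feature 3"]))
```

The output is plain text, so it can be pasted into a session as-is or saved next to the project for later reuse.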

After reading the report, if there were no issues, I opened a completely new session and had Claude read the report to begin implementation. All the technical details lived in the report, so they did not vanish along with the chat history. Even in a new session, Claude had enough background information to work effectively without my having to explain everything all over again.

The most unexpected part of this approach was that the Agents sometimes proposed solutions I hadn’t thought of, or even suggested that certain features did not need to be implemented at all, usually with very good reasons. I realised that if I had simply specified the design in my head for Claude to execute, these alternatives would never have been proposed. I would have just followed my original approach, blind to the side roads. I believe this is where the true value of this workflow lies: it is not about making requirements clear enough for Claude to execute, but about truly allowing Claude to participate in the discussion, discover problems, challenge ideas, and ultimately find a better way together.

I saw a post on Reddit introducing an MCP called Ouroboros. This MCP uses a Socratic questioning style to clarify requirements with the user step by step before handing them to Claude Code. In theory, Claude Code then self-tests and iterates until the program meets the requirements. Out of curiosity, I tried letting Ouroboros write the same TPRS tutor app. Ouroboros asked me nearly thirty questions, started work, and burned through my entire Claude token quota, yet the resulting program still did not run.

Meanwhile, the first version of the app that I brainstormed with Claude Code is already running smoothly.